Last month I marched with 400,000 people in the streets of New York City for the biggest climate demonstration in history. Yet we are never going to fix problems as scientifically, culturally, and economically complex as climate change unless we democratize data. What if anyone could type their zip code into their browser and see how climate change will affect their community? Or if any community around the world, no matter how small or poor, could do sophisticated hydrological assessments to prepare for coming changes? What if the 400,000 in New York last month could lobby their city planner or congressperson this month with localized climate impact information?

Our project seeks to actualize this potential for one threat: flooding. As a hydrologist and a climate change communications strategist, we believe the next innovation in Big Data will not be a scientific discovery or a more technical early warning system. The next breakthrough should challenge the dominant power dynamics and empower people by putting data into their hands.

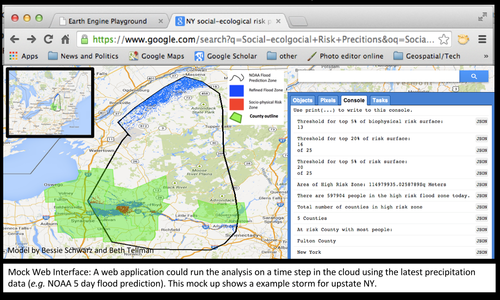

As the magnitude and frequency of US flooding increase, there is a need for faster, more precise, and more reliable flood vulnerability mapping that accounts for social and physical factors, predicts who is at risk from oncoming storms, and projects scenarios for a changing climate. Using the state-of-the-art online geo-technology platform Google Earth Engine, our algorithm draws on publicly available physical and social data to show governments, businesses, and the public the science behind their vulnerability to disaster. The 2013 prototype of the model uses data in the cloud (including elevation data, satellite imagery, and census data) to dynamically refine a surface of risk inside a coarse-resolution flood prediction zone from a weather service, typically NOAA.
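To make the idea of "refining a surface of risk inside a coarse zone" more concrete, here is a minimal sketch in plain Python. It is not our actual algorithm: the function name `refine_risk`, the `social_weight` parameter, and the scoring rule (lower elevation inside the zone means higher exposure, blended with a 0-to-1 social vulnerability index) are all assumptions chosen for illustration, and the grids are toy values rather than real elevation or census data.

```python
from typing import List, Optional


def refine_risk(
    elevation: List[List[float]],
    social: List[List[float]],
    in_zone: List[List[bool]],
    social_weight: float = 0.5,
) -> List[List[Optional[float]]]:
    """Illustrative only: score each grid cell inside a coarse flood zone.

    elevation     -- cell elevations (any consistent unit)
    social        -- hypothetical social vulnerability index per cell, 0 to 1
    in_zone       -- True where the coarse flood prediction zone covers the cell
    social_weight -- assumed blend between physical and social factors
    """
    # Collect elevations of the cells that fall inside the coarse zone.
    zone_elev = [
        elevation[r][c]
        for r in range(len(elevation))
        for c in range(len(elevation[r]))
        if in_zone[r][c]
    ]
    if not zone_elev:
        return [[None for _ in row] for row in elevation]

    low, high = min(zone_elev), max(zone_elev)
    span = high - low

    risk: List[List[Optional[float]]] = []
    for r, row in enumerate(elevation):
        out_row: List[Optional[float]] = []
        for c, elev in enumerate(row):
            if not in_zone[r][c]:
                out_row.append(None)  # outside the zone: no score
                continue
            # Assumed physical exposure: lowest cell in the zone scores 1,
            # highest scores 0. A flat zone is treated as fully exposed.
            exposure = 1.0 if span == 0 else (high - elev) / span
            out_row.append(
                (1 - social_weight) * exposure + social_weight * social[r][c]
            )
        risk.append(out_row)
    return risk


# Toy 2x2 grid; the bottom-right cell lies outside the coarse flood zone.
toy_risk = refine_risk(
    elevation=[[2.0, 4.0], [6.0, 10.0]],
    social=[[0.2, 0.8], [0.5, 0.1]],
    in_zone=[[True, True], [True, False]],
)
```

In this toy case the mid-elevation cell with high social vulnerability ends up scoring slightly above the lowest-lying cell, which is the kind of pattern a purely physical flood map would miss.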

This year, we are partnering again with Google Earth Outreach, Google Crisis Maps, and Data-Pop Alliance to use the model for climate change risk assessment and publicize the results in everyone’s Google Maps. Over the next year we will refine and validate the social and ecological predictors of vulnerability and convert the algorithm to the Google Earth Engine platform to make it available in the cloud. After this phase we will have a socio-ecological model that identifies hotspots of vulnerability. The second phase will input various climate predictions for average precipitation, population, emissions scenarios and more to adjust vulnerability in a changing climate and in different policy futures.

The final product will be a web interface that shows what flood events will look like in the future. The localized science and analysis will help individuals understand the climate crisis and take control when preparing for and responding to hazards.

We could imagine a planner in Boulder, Colorado using this model to show the importance of adaptation when rebuilding after the city's recent floods. We have spoken with an aid organization in Somalia that wishes to fortify communities against devastating floods but does not know which regions are most at risk. A citizen in a small town might log on, notice that their risk of flooding will increase in the next 10 years, and take additional preparation steps. Simply put, the application facilitates adaptation, preparation, and risk communication.

Our model turns the common Big Data model on its head, demanding that we focus on getting more data to people rather than just ingesting data from people. We hope this project will be a springboard for questioning our obsession with acquiring more and better data, as well as what and whom our current science and technology privilege.
